Breaking down Figma's AI Report 2025

The 31-point gap nobody's talking about in Figma's AI Report 2025.

Hey there! This is a 🔒 subscriber-only edition of AI First Designer (by ADPList) 🔒, to help designers transition into successful AI-First builders. Members get access to proven strategies, frameworks, and playbooks.


Friends,

Today’s post will give you a preview of 2026.


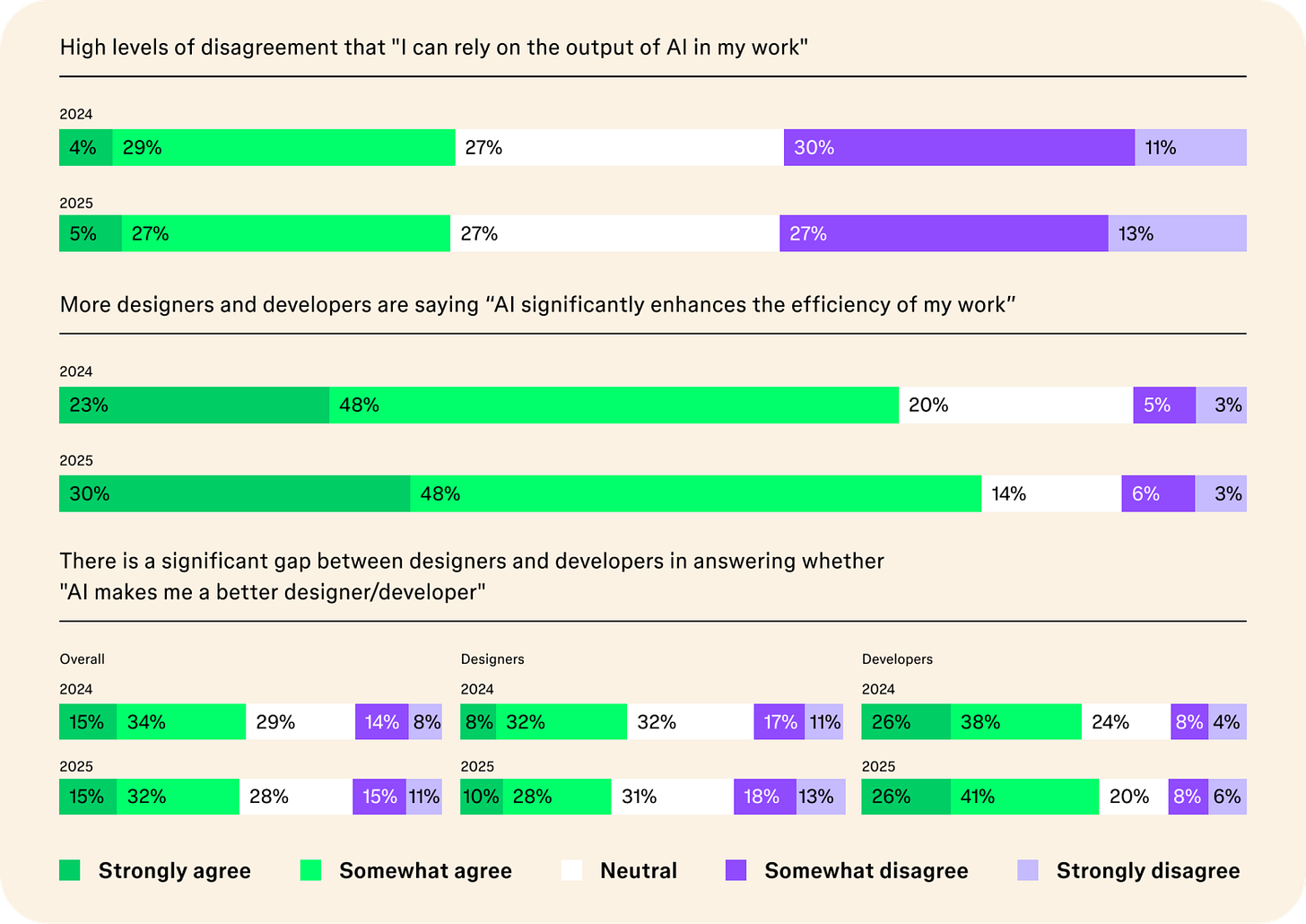

Here’s the most revealing stat from Figma’s latest AI Report: 78% of designers say AI makes them more efficient, but only 47% say it makes them better at their role.

That 31-point gap tells you everything about where we are with AI in design right now.

Over 3,000 designers and developers shared how they’re actually using AI in 2025—not the hype, not the speculation, but the messy reality of shipping AI-powered products. And the data reveals something surprising: the teams winning with AI aren’t the ones using it the most. They’re the ones who fundamentally changed how they work.

More Figma users are shipping AI products than last year.

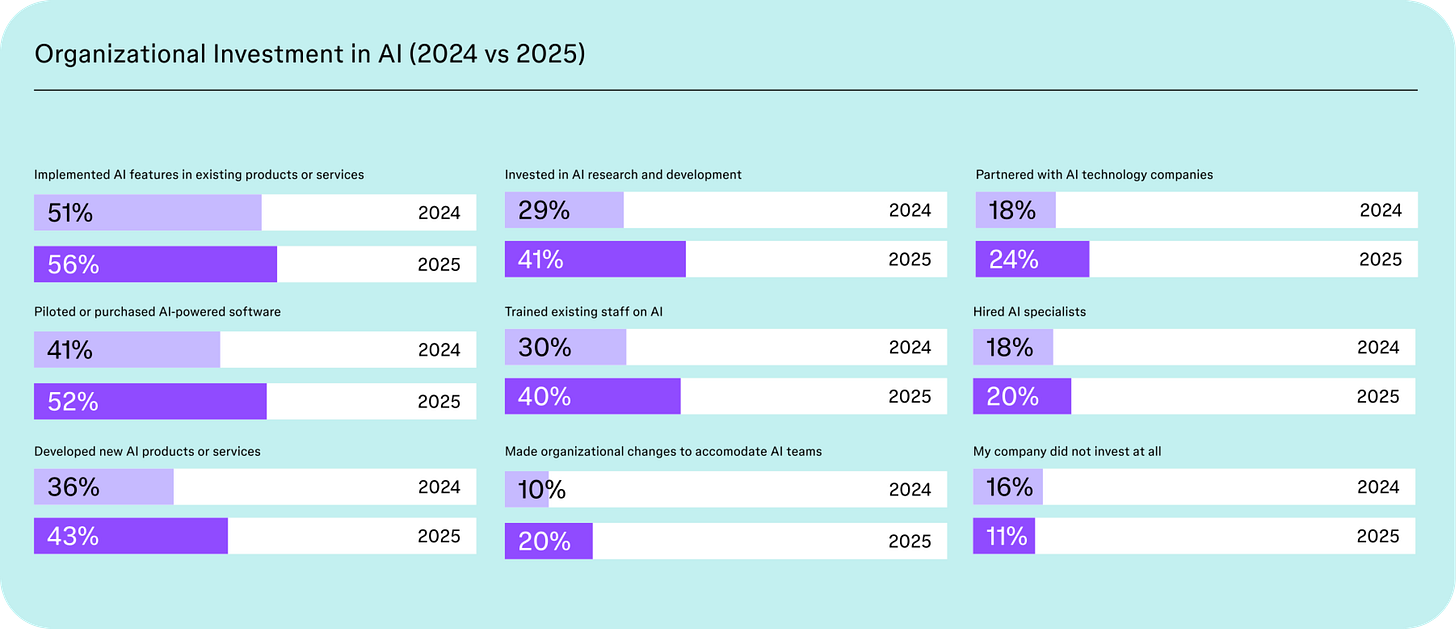

This year, 34% of Figma users say they’ve shipped applications and software that include generative AI, compared to 22% last year. Meanwhile, 56% of Figma users report their companies are integrating AI into their existing products this year, and 43% are creating new products with AI capabilities.

Let me break down what actually matters in this report.

The Trust Problem Is Real (And Getting Worse)

Only 32% of designers trust AI output. That is the lowest score of any metric Figma measured.

Think about that for a second. Roughly one in three designers trusts what AI produces. Yet most of us are using it daily. We’re in this weird limbo where AI is embedded in our workflows, making us faster, but we’re constantly second-guessing everything it produces.

The trust gap gets even wider between disciplines. 68% of developers say AI improves their work quality. Only 40% of designers agree.

Why the disconnect? Design work is fundamentally more subjective. A developer can run tests to verify AI-generated code works. A designer? You’re evaluating if a layout “feels right” or if copy “sounds on-brand.” That’s harder to verify, harder to trust, and harder to defend to stakeholders.

This creates a real problem: If you can’t trust the output, you spend more time reviewing than you save by generating. The efficiency gains evaporate.

The Paradox: More AI Products, Same Expected Impact

Here’s what should make you pause:

The percentage of teams shipping AI products jumped from 22% to 34%, a 12-point gain and roughly a 55% relative increase. But the share of people who believe AI will significantly impact their work barely moved, from 23% to 27%.
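To compare the two shifts on the same footing, it helps to compute both the absolute change (in percentage points) and the relative change for each. Here is a minimal Python sketch that uses only the survey figures quoted above. The `change` helper and the labels are hypothetical names chosen for illustration.

```python
def change(before: float, after: float) -> tuple[float, float]:
    """Return (absolute change in percentage points, relative change in percent)."""
    points = after - before
    relative = points / before * 100
    return points, relative


# Survey shares (percent of respondents) as quoted in the report summary above.
stats = {
    "Shipped AI products": (22, 34),
    "Expect AI to significantly impact work": (23, 27),
}

for label, (before, after) in stats.items():
    points, relative = change(before, after)
    print(f"{label}: +{points} points, +{relative:.0f}% relative")
```

Run as-is, it reports a 12-point (about 55%) rise for shipping versus a 4-point (about 17%) rise for expected impact. On either measure, conviction is lagging well behind shipping.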

Translation: We’re shipping more AI features, but we’re not more convinced they matter.

This isn’t necessarily bad news. It might actually signal something healthy: designers are moving past the hype phase into the “let’s see what actually works” phase. The novelty is wearing off. We’re getting pragmatic.

But it also suggests something troubling: many teams might be shipping AI features because they feel they have to, not because they’ve solved real user problems.

For small companies, this pressure is intense. The importance of “AI features” for market share tripled among smaller teams. If you’re competing for attention and funding, you need an AI story. Whether that AI actually improves your product is almost secondary.

The Real Shift: From Copilots to Agents

While everyone was focused on “AI is making us faster,” the real transformation happened quietly in the background.

The percentage of teams building agentic AI more than doubled—from 21% to 51%.

This is the actual story. We’re moving from AI as a tool you direct (copilot) to AI that can complete multi-step tasks independently (agent). That’s not an incremental change. It’s a fundamental shift in how products work.

What this means for designers:

You’re no longer designing interfaces for humans to complete tasks. You’re designing systems where AI completes tasks and humans verify, redirect, or intervene. The entire interaction model is inverted.

Most designers aren’t ready for this. Our training, our processes, our tools—they’re all built for human-directed interfaces. Agentic AI requires designing for delegation, error handling, and trust calibration in ways we haven’t systematized yet.

“52% of designers say design becomes MORE important for AI products”

This tracks. When the AI is doing the heavy lifting, the interface design becomes critical. It’s the difference between AI that feels magical and AI that feels frustrating.

The One Thing Successful Teams Do Differently

Here’s the data point that matters most:

75% of successful AI products came from teams with tight design-development collaboration.

But here’s the kicker: 81% of teams whose process was “significantly different” from their usual workflow were successful.

Connect those dots. The winning formula isn’t just collaboration—it’s changing how you collaborate.

What “significantly different” actually looks like:

Based on the patterns in successful teams, here’s what changed:

Earlier technical involvement: Designers and developers are pairing during exploration, not just at handoff. When you’re designing for AI capabilities, you need to understand what’s actually possible, not just what’s theoretically cool, before you commit to a direction.

Faster iteration cycles: AI features are harder to get right through traditional specs. Successful teams are prototyping with real models, testing with actual output, and iterating based on what the AI actually does—not what they hoped it would do.

Shared evaluation criteria: When 68% of developers think AI improves quality but only 40% of designers agree, you need explicit conversations about what “good” looks like. Successful teams defined these criteria together, upfront.

New review processes: That 32% trust problem? Successful teams built new review workflows specifically for AI-generated content. They’re not treating AI output the same as human work.

The Skills Gap Nobody’s Preparing For

85% of designers say learning AI skills is essential to their future success.

But here’s what the report doesn’t say: most designers are still figuring out what those skills even are.

Is it prompt engineering? Understanding model capabilities? Designing evaluation frameworks? Training data literacy? All of it?

The honest answer: we don’t know yet. The field is moving too fast.

But here’s what we do know:

The designers who are succeeding with AI aren’t necessarily the most technical. They’re the ones who are comfortable with uncertainty, who iterate quickly, and who’ve built strong cross-functional relationships.

Those “soft skills” everyone said were important? They’re now table stakes. You can’t ship good AI products in a silo. The gap between design vision and technical reality is too large to bridge alone.

What To Actually Do With This Data

If you take nothing else from Figma’s report, take these three actions: